By Christian Hansen

Performance marketing is currently facing a crisis of confidence. We see growth teams shifting budgets back into "brand," Top-of-Funnel experiments, or one-off stunts because their primary performance channels feel increasingly unreliable.

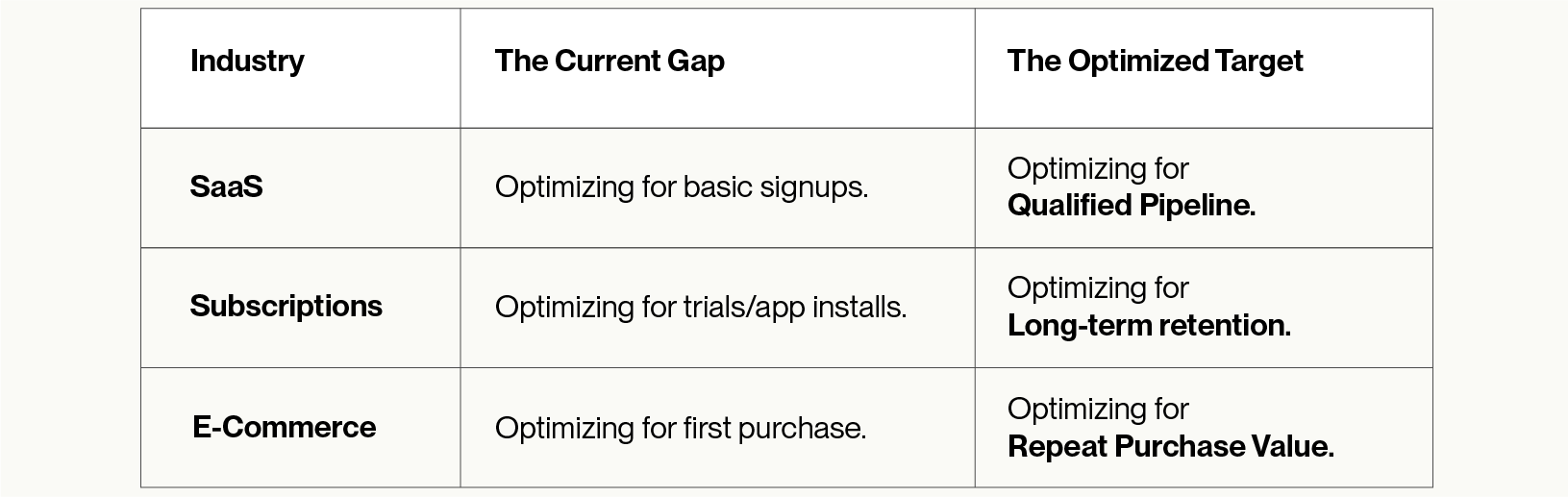

The common diagnosis is that "the algorithm has changed." The real problem is signal misalignment: most teams are feeding world-class ad platforms low-resolution targets.

At Churney, we view this as a Signal Optimization problem. We aren't trying to outsmart the platforms; we are helping them work better by giving them a high-definition target to hit.

A common mistake in internal builds is treating Predictive Lifetime Value (pLTV) as a standard machine learning problem. In a lab, you optimize for accuracy. In production, you are designing a continually adapting control system.

The pLTV model is not the end goal; it is a training signal for a black-box model, the ad platform's own optimization algorithm. This changes the objective entirely.

Once a pLTV signal is deployed, the environment immediately changes. User behavior shifts as your product, pricing, and onboarding evolve, while platform algorithms adapt to the very signal you are sending.
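One way to notice this kind of shift is to compare the distribution of recent pLTV predictions (or of any input feature) against a reference window. The sketch below uses the Population Stability Index, a common drift metric; it is an illustrative choice, not a description of Churney's monitoring. The function name `psi`, the equal-width binning, and the bin count are all assumptions.

```python
import math
from typing import List, Sequence


def psi(reference: Sequence[float], current: Sequence[float],
        n_bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between two samples.

    Bins are equal-width over the reference sample's range (an assumption;
    quantile bins are another common choice). Larger values mean more drift.
    """
    lo, hi = min(reference), max(reference)
    if hi == lo:
        raise ValueError("reference sample has zero range")
    width = (hi - lo) / n_bins

    def bin_shares(values: Sequence[float]) -> List[float]:
        counts = [0] * n_bins
        for v in values:
            # Values outside the reference range are clamped into the edge bins.
            idx = min(max(int((v - lo) / width), 0), n_bins - 1)
            counts[idx] += 1
        # Floor each share at eps so empty bins do not produce log(0).
        return [max(c / len(values), eps) for c in counts]

    ref_shares = bin_shares(reference)
    cur_shares = bin_shares(current)
    return sum((c - r) * math.log(c / r)
               for r, c in zip(ref_shares, cur_shares))


# Hypothetical data: last quarter's predictions vs. this week's.
reference_preds = [i / 100 for i in range(100)]
current_preds = [0.5 + i / 200 for i in range(100)]
drift_score = psi(reference_preds, current_preds)
```

A widely used rule of thumb treats a PSI above roughly 0.25 as a significant shift worth investigating, but any alert threshold should be tuned to your own data.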

"You aren’t maintaining a static predictor; you are operating a system that must be retrained, recalibrated, and monitored continuously," says Brian Brost, CTO at Churney. "Feature and target distributions drift, and the 'meaning' of pLTV drifts as your acquisition mix changes. This is a primary engineering responsibility, not a 'set and forget' task."

Ad platforms operate on an asymmetry of information. They know which users are "active spenders" across the web, which makes those users highly competitive and expensive, but they don't know who is specifically valuable to your unique product. To bridge this gap and help platforms bid on intent rather than on general profile alone, we use two core signal engineering principles.

Ad platforms are designed to allow value updates that increase a prediction, but they rarely allow you to decrease a value once it has been sent.
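One way to work within this constraint is to treat the sent value as a ratchet: start from a deliberately conservative first estimate and only send an update when the prediction rises above what the platform already holds. The sketch below is a minimal illustration under those assumptions; the function name `value_to_send` and the `initial_discount` of 0.8 are hypothetical and not taken from any platform's API or from Churney's system.

```python
from typing import Optional


def value_to_send(last_sent: Optional[float], predicted: float,
                  initial_discount: float = 0.8) -> Optional[float]:
    """Decide what value, if any, to send to the ad platform.

    last_sent: the value the platform currently holds for this user,
               or None if nothing has been sent yet.
    predicted: the latest pLTV prediction for the user.
    initial_discount: hypothetical haircut applied to the first send,
               since an overestimate cannot be corrected downward later.
    Returns the value to send, or None when no update should be sent.
    """
    if last_sent is None:
        # First send: understate on purpose, leaving room to revise upward.
        return round(predicted * initial_discount, 2)
    if predicted > last_sent:
        # The prediction rose above what the platform holds: send the increase.
        return round(predicted, 2)
    # The prediction fell or is unchanged: a decrease cannot be sent, so skip.
    return None


first = value_to_send(None, 100.0)      # conservative first value
skipped = value_to_send(first, 60.0)    # lower prediction, no update
raised = value_to_send(first, 120.0)    # higher prediction, update sent
```

The trade-off in this design is that a larger discount lowers the risk of a value that can never be walked back, at the cost of a weaker early signal.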

There is a constant tension between Certainty and Delay.

The most dangerous failures in pLTV are slow and expensive to detect because of endogenous feedback loops.

In our experience, 6 to 12 months is a realistic timeline for an internal system to become reliably net-positive, and only if the team manages to map all the failure modes.

We describe Churney as the Signal Optimization Layer because we close the gap between what the platform sees and what the business cares about.

We are not trying to "beat" the algorithms of Meta or Google; we are helping them work better. Each platform has non-standardized requirements: different event schemas (CAPI), timing constraints, and update rules.

Most of this knowledge isn't in a manual; it’s accumulated through thousands of live experiments. By using a production-hardened system, you gain a "North Star" benchmark. Even for teams that eventually build in-house, having a sophisticated benchmark allows you to see exactly how much money you might be leaving on the table during the "learning" phase of a DIY system.

The platforms are ready to find your best customers. We just give them the high-definition signal they need to get the job done.

Your data warehouse has incredible value. Our causal AI helps unlock it.