By Noy Rotbart

Hightouch recently published a piece that gave a name to something our industry has been circling for years: signal engineering. Their argument is simple: as ad platforms automate more of the bidding, targeting, and creative process, the marketer’s job shifts from pulling levers to feeding the machine. The quality of your conversion signals becomes your competitive advantage.

We agree. And we’d go further.

Signal engineering as a discipline is real. It matters. But naming the discipline is different from mastering it. The Hightouch piece lays out a useful maturity model, from server-side tracking all the way to predictive signals. It’s a good map. But we’ve spent the last several years living inside that territory, and we’ve learned that the gap between Stage 3 (value-differentiated signals) and Stage 5 (predictive signals) is where most teams get stuck. Not because they lack infrastructure, but because the problem changes shape once you’re actually in it.

This piece is about that gap. Not how to pipe data to ad platforms. That problem is increasingly solved. This is about how to design the signal itself so it actually works in production.

The most common mistake we see in predicted lifetime value (pLTV) implementations is treating the predictive model as the finish line. A team invests months building an accurate predictor of lifetime value, celebrates a low RMSE, ships it to Meta or Google via a server-side conversions API, and waits for ROAS to improve. And then performance goes sideways.

A pLTV model that’s accurate in a notebook is not the same thing as a pLTV signal that works in an auction. The model is a component. The signal is a system: the model, the transformation logic, the timing of events, the interaction with platform learning dynamics, and a feedback loop that never stops moving. Across hundreds of live experiments, we’ve found that how the signal is activated on the ad platform is at least as important as the offline performance of the underlying model.

This is a point that gets missed in a lot of signal engineering discussions. Hightouch describes the anatomy of strong signals as completeness, business alignment, and timeliness. Those are necessary conditions. But they’re not sufficient. The signal also needs to be stable under auction pressure, and that’s a property that doesn’t show up in any offline evaluation metric. The value you report to the platform is not a revenue forecast. It’s a shaped artifact, designed to teach the platform’s learning algorithm what “valuable” looks like for your business. Getting the shape right is the hard part.

Ad auctions are adversarial environments. You’re not making predictions in a vacuum. You’re making predictions that determine how much you bid, which determines who you acquire, which determines the data your model trains on next.

This trips up even sophisticated data science teams. If your model overestimates a user’s future value, you bid more aggressively for that user. You’re more likely to win the auction, precisely because other advertisers, bidding on observed behavior rather than predictions, are passing on them. Your “accurate” model is now systematically acquiring the users it’s most wrong about.
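A toy simulation makes this selection effect concrete. The sketch below is illustrative only: the value distributions, the noise level, and the rule "we win whenever our prediction beats a rival bid" are all assumptions, not a model of any real auction. It shows that predictions which are unbiased across all users can still be biased upward among the users you actually win.

```python
import random
import statistics


def simulate_selection_bias(n_users=20000, noise_sd=0.5, seed=0):
    """Toy model: bid our noisy prediction, win when it beats a rival bid.

    All distributions and parameters here are hypothetical.
    Returns (mean error over all users, mean error over won users).
    """
    rng = random.Random(seed)
    all_errors = []
    won_errors = []
    for _ in range(n_users):
        true_value = rng.lognormvariate(0.0, 1.0)           # assumed true LTV
        prediction = true_value + rng.gauss(0.0, noise_sd)  # zero-mean error
        rival_bid = rng.lognormvariate(0.0, 1.0)            # independent rival
        error = prediction - true_value
        all_errors.append(error)
        if prediction > rival_bid:                          # we win this user
            won_errors.append(error)
    return statistics.mean(all_errors), statistics.mean(won_errors)


mean_error_all, mean_error_won = simulate_selection_bias()
print(f"mean error, all users: {mean_error_all:+.3f}")
print(f"mean error, won users: {mean_error_won:+.3f}")
```

Under these assumptions the average error across all users sits near zero, while the average error among won users is clearly positive. The model did not get worse; the auction filtered for its overestimates.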

We’ve seen this play out in practice. One mobile app we work with saw their pLTV campaign targeting higher-value users, but at costs so high that the ROAS actually dropped below their baseline. The fix wasn’t a better model. It was compressing the range of predictions to reduce overbidding on the top end. Only after that adjustment did the campaign start outperforming, eventually reaching +45% ROAS lift.

This is why we think of signal design as a control system problem rather than a prediction problem. The goal isn’t to minimize error in a static sense. It’s to produce a signal that remains directionally stable as the platform adapts to it and your acquisition mix shifts underneath.

In practice, this means we often transform raw predicted values into logarithmic scales or rank-based scores. We apply what we call the pessimism principle: start conservative and upgrade predictions as behavioral evidence accumulates, rather than leading with optimistic projections the platform will happily over-bid on. Ad platforms readily accept value increases. They rarely let you walk a value back down.
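As a rough sketch of what these transformations can look like, the snippet below shows a log compression, a rank-based score, and one possible reading of the pessimism principle. The function names, the scale factors, and the seven-day ramp are hypothetical choices for illustration, not the parameters of any production system.

```python
import math


def log_compress(values, scale=10.0):
    """Compress the range of raw predicted values with log(1 + v)."""
    return [scale * math.log1p(max(v, 0.0)) for v in values]


def rank_score(values, low=1.0, high=100.0):
    """Replace each value with an evenly spaced score based on its rank.

    Ties are broken by input order, which is a simplification.
    """
    n = len(values)
    if n == 0:
        return []
    if n == 1:
        return [low]
    order = sorted(range(n), key=lambda i: values[i])
    scores = [0.0] * n
    for rank, i in enumerate(order):
        scores[i] = low + (high - low) * rank / (n - 1)
    return scores


def pessimistic_value(point_estimate, lower_bound, evidence_days,
                      full_confidence_days=7.0, previously_sent=0.0):
    """Start at a conservative lower bound and move toward the point
    estimate as evidence accumulates. Never report less than what was
    already sent, since values are hard to walk back down."""
    weight = min(max(evidence_days / full_confidence_days, 0.0), 1.0)
    candidate = lower_bound + weight * (point_estimate - lower_bound)
    return max(candidate, previously_sent)


raw = [5.0, 50.0, 500.0, 5000.0]
compressed = log_compress(raw)
ranked = rank_score(raw)
print([round(v, 1) for v in compressed])
print(ranked)
print(pessimistic_value(100.0, 40.0, evidence_days=3.5))
```

In this example the raw values span a factor of 1,000, while the log-compressed values span less than a factor of 5, so a single overestimated whale has far less pull on the bid. The rank score goes further and discards magnitude entirely, keeping only the ordering.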

Building pLTV in-house sounds logical. It often isn’t.

The trap is subtle: standard correlational models optimize for early engagement signals. Do that long enough, and you’ll teach Meta and Google to find your “best” users: people who binge your onboarding but never pay. The proxy becomes the product, and the product breaks.

Scaling profitably means moving past prediction and into causality. Three things matter here. First, distribution shift is real and most models ignore it. When you change your acquisition strategy, you change who you acquire. Correlational models don’t know that. Causal models do. They account for the fact that the population shifts as you scale, so your targeting doesn’t silently degrade while your dashboard looks fine.

Second, cohort logic and user-level logic are not the same thing. Cohorts are great for budget allocation, but not for target ROAS (tROAS) bidding. Precision value-based optimization requires user-level predictions: individual forecasts, not averages. Conflating the two is one of the most common and costly mistakes we see.

Third, miscalibrated signals are worse than no signals. Ad platforms learn from what you feed them. Overestimated predicted values mean overbidding, which means volatile performance, which means your team concludes pLTV doesn’t work and retreats to optimizing for registrations. The signal needs to be calibrated to actual business outcomes, not to what looks good in a spreadsheet.
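One simple way to check calibration is to bucket users by predicted value and compare predicted to realized revenue in each bucket. The sketch below assumes you have matured cohorts with both numbers per user; the function name and the sample data are hypothetical.

```python
def calibration_by_bucket(predicted, realized, n_buckets=5):
    """Sort users by predicted value, split them into equal-sized buckets,
    and report (bucket, mean predicted, mean realized, predicted/realized).

    A ratio well above 1.0 in the top bucket suggests overbidding risk.
    """
    pairs = sorted(zip(predicted, realized))
    n = len(pairs)
    report = []
    for b in range(n_buckets):
        chunk = pairs[b * n // n_buckets:(b + 1) * n // n_buckets]
        if not chunk:
            continue
        pred_sum = sum(p for p, _ in chunk)
        real_sum = sum(r for _, r in chunk)
        ratio = pred_sum / real_sum if real_sum > 0 else float("inf")
        report.append((b, pred_sum / len(chunk), real_sum / len(chunk), ratio))
    return report


# Made-up example: the low bucket is calibrated, the high bucket is 2x too high.
predicted = [10.0, 20.0, 30.0, 40.0]
realized = [10.0, 20.0, 15.0, 20.0]
report = calibration_by_bucket(predicted, realized, n_buckets=2)
for bucket, mean_pred, mean_real, ratio in report:
    print(bucket, mean_pred, mean_real, round(ratio, 2))
```

A model can post a respectable aggregate error and still show a ratio like the 2.0 in the top bucket here, which is exactly where value-based bidding spends the most.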

The Hightouch maturity model is linear: Stage 1, then 2, then 3, all the way to Stage 5. That makes sense as a planning framework. But once a predictive signal goes live, the environment stops being linear.

The moment you deploy a pLTV signal, the platform changes who it sends you. Your incoming user distribution shifts. Your model, which was trained on pre-signal data, is now making predictions about a population it hasn’t seen before. If you retrain on post-signal data without accounting for this, you’re training on data your own signal caused. The model drifts. The signal drifts. And by the time you notice, often weeks later because learning cycles run 7 to 14 days minimum, real budget has been misallocated.
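One way to notice this shift earlier is to compare the distribution of model scores (or of a key input feature) between the training population and recently acquired users. The sketch below uses the population stability index, a common drift statistic; it is our choice for illustration, and the bin count and sample data are assumptions.

```python
import math


def population_stability_index(expected, actual, n_bins=10, eps=1e-6):
    """PSI between a reference sample and a live sample.

    Bins are equal-width over the range of the reference sample; live
    values outside that range are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / n_bins or 1.0  # guard against a constant sample

    def shares(xs):
        counts = [0] * n_bins
        for x in xs:
            idx = int((x - lo) / width)
            counts[min(max(idx, 0), n_bins - 1)] += 1
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / len(xs), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


training_scores = [float(x) for x in range(100)]   # pre-signal population
live_scores = [x + 30.0 for x in training_scores]  # shifted post-signal mix
psi_same = population_stability_index(training_scores, training_scores)
psi_shifted = population_stability_index(training_scores, live_scores)
print(psi_same, round(psi_shifted, 2))
```

A statistic like this does not fix the feedback loop, and it says nothing about causality. It only flags that the population the model now scores no longer looks like the one it was trained on, ideally well before a 7 to 14 day learning cycle has finished spending against a drifting signal.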

Here’s what this actually looks like. We recently worked with a leading subscription company on Meta. We launched the experiment on February 10th. By February 20th came the first signal change: results weren’t good enough, and we had to recalibrate to drastically downgrade monthly subscribers relative to yearly payers. March 4th brought another signal change: results still weren’t consistently good enough, so we had to fine-tune the trade-off between sending the signal early (which the platform needs) and waiting for enough behavioral evidence (which the model needs). By mid-March, about five weeks and three signal iterations in, the campaign was finally outperforming the baseline consistently. The client is now asking to double the budget.

That timeline is normal. Not because anyone made a mistake. Because the system is adaptive and the only way to calibrate it is to run it, observe how the platform responds, adjust, and run it again. Each iteration costs real money and real time. This is the iteration penalty that makes in-house pLTV so expensive. We’ve seen 6 to 12 months as a realistic timeline for an internal system to become reliably net-positive, and that’s if the team correctly identifies every failure mode along the way.

This is where the signal engineering conversation needs to evolve.

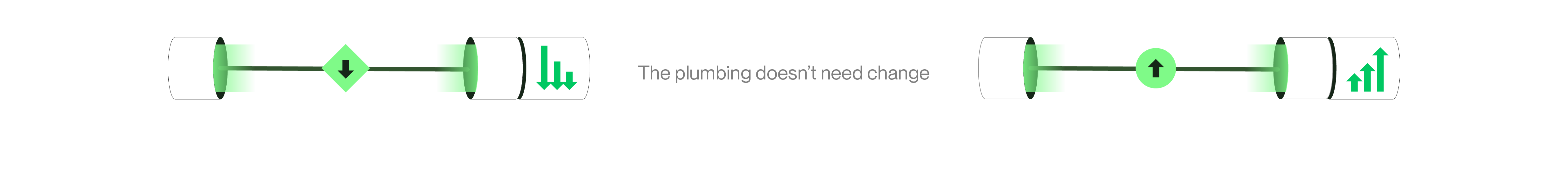

The infrastructure side of the problem, getting data from warehouses to ad platforms reliably, in the right format, within attribution windows, is increasingly solved. Tools like Hightouch, along with native CAPI integrations and CDPs, have made the plumbing dramatically better. That’s a genuine achievement and it’s unlocked a lot of value.

But the infrastructure is only as good as what flows through it. A perfectly reliable pipe that delivers a poorly designed signal will reliably produce poor results. The hard part isn’t the activation. It’s the signal design: the modeling, the calibration, the continuous adaptation, and the platform-specific knowledge of how each ad network’s learning algorithm actually responds to the values you send it.

Each platform has non-standardized requirements: different event schemas, timing constraints, and value update rules. We’ve had cases where the platform’s own guidance contradicted what actually worked. Meta told us a certain signal timing approach wouldn’t help on their value optimization products. It appeared to make a difference anyway. The only way to know was to run the experiment. Most of this knowledge isn’t documented anywhere. It’s accumulated through thousands of live experiments and hundreds of thousands of dollars in learning spend.

When the signal is right, the numbers are real. Zapier saw a 190% improvement in ROAS on Meta after we replaced their in-house pLTV signal with ours. Not because their data science team was bad. Because signal design for auction optimization is a fundamentally different discipline from building a good predictive model.

Headway saw +45% ROAS. Podimo, +35%. Underoutfit, +33%. A gaming studio saw their Churney campaign deliver 2.4x the revenue per user compared to their baseline, but only after the cohorts had two weeks to mature. In the first week, the baseline looked stronger. Patience and the right feedback loop turned it around.

One number we find particularly telling: across our portfolio, we consistently see pLTV-acquired cohorts grow 30% from Day 14 to Day 30, compared to roughly 17% for baseline cohorts. The signal doesn’t just acquire better users up front. It acquires users whose value compounds.

Signal engineering is becoming table stakes. Within a few years, every serious performance marketing team will be sending some form of value-differentiated or predictive signal to their ad platforms. The question won’t be whether you do signal engineering. It will be how well you do it.

We think the discipline will split into two layers. The first is activation: the infrastructure that moves signals from your data environment to ad platforms. This is where the CDPs and data activation platforms live, and they’ll keep getting better at it.

The second layer is signal optimization: the intelligence that determines what the signal should be, how it should be shaped for each platform, and how it should adapt as conditions change. This is the layer that requires deep expertise in auction dynamics, causal modeling, and the kind of production-hardened iteration that only comes from running thousands of live experiments across hundreds of millions in ad spend.

Hightouch calls signal engineering a practice. We agree. But like any engineering discipline, the practice needs both good tools and deep domain expertise. You need reliable pipes. You also need to know exactly what to put through them.

At Churney, this is the problem we solve. Not the infrastructure. The signal itself: designing it, calibrating it, keeping it stable in production, and continuously adapting it as platforms evolve and your business changes. We’re the signal optimization layer between your data warehouse and the ad platform’s learning algorithm.

The pipes are ready. What flows through them is the hard part.
